① Change into the Kafka directory and edit the configuration file: vim config/server.properties

② Start ZooKeeper:

bin/zookeeper-server-start.sh config/zookeeper.properties

ZooKeeper listens on port 2181 by default. To change the listening port, edit clientPort in config/zookeeper.properties.
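As a quick way to confirm which port a given zookeeper.properties would use, the lookup can be sketched in plain Java. This is a minimal sketch, not part of Kafka: the class name ZooKeeperPortCheck and the sample text are assumptions made for illustration, and the fallback to 2181 simply mirrors the default mentioned above.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Hypothetical helper (not part of Kafka or ZooKeeper): reads clientPort
// from text in zookeeper.properties format.
class ZooKeeperPortCheck {

    // Returns the configured clientPort, or 2181 when the key is absent.
    static int clientPort(String propertiesText) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(propertiesText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return Integer.parseInt(props.getProperty("clientPort", "2181").trim());
    }

    public static void main(String[] args) {
        // Illustrative file content; a real file would be read from config/zookeeper.properties.
        String sample = "dataDir=/tmp/zookeeper\nclientPort=2182\n";
        System.out.println(clientPort(sample)); // prints 2182
    }
}
```

If the port is changed, the --zookeeper localhost:2181 argument used in the later kafka-topics.sh commands has to be changed to match.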

③ Start the Kafka broker:

bin/kafka-server-start.sh config/server.properties

④ Create a topic named HelloWorld:

bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic HelloWorld

⑤ Verify that the topic was created:

bin/kafka-topics.sh --list --zookeeper localhost:2181

⑥ Add the dependency to the pom file:

<!-- https://mvnrepository.com/artifact/org.apache.kafka/kafka -->
<dependency>
    <groupId>org.apache.kafka</groupId>
    <artifactId>kafka-clients</artifactId>
    <version>0.11.0.0</version>
</dependency>

⑦ Write a producer:

package com.winter.kafka;

import java.util.Properties;

import org.apache.kafka.clients.producer.KafkaProducer;
import org.apache.kafka.clients.producer.Producer;
import org.apache.kafka.clients.producer.ProducerRecord;

public class ProducerDemo {

    public static void main(String[] args) {
        Properties properties = new Properties();
        // Address of the Kafka broker to connect to
        properties.put("bootstrap.servers", "47.91.214.23:9092");
        properties.put("acks", "all");
        properties.put("retries", 0);
        properties.put("batch.size", 16384);
        properties.put("linger.ms", 1);
        properties.put("buffer.memory", 33554432);
        properties.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        properties.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

        Producer<String, String> producer = null;
        try {
            producer = new KafkaProducer<String, String>(properties);
            // producer.send(new ProducerRecord<String, String>("HelloWorld", "aaaaaaaaaaaa"));
            for (int i = 0; i < 100; i++) {
                String msg = "This is Message " + i;
                producer.send(new ProducerRecord<String, String>("HelloWorld", msg));
                System.out.println("Sent:" + msg);
            }
        } catch (Exception e) {
            e.printStackTrace();
        } finally {
            // producer is still null if the constructor threw, so guard the close
            if (producer != null) {
                producer.close();
            }
        }
    }
}
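The numeric settings in the producer are easier to read once the byte counts are spelled out. The sketch below rebuilds the same Properties with plain java.util classes only, so it runs without a broker or the Kafka client on the classpath. The class name ProducerSettings and the build method are hypothetical names introduced for illustration, and the comments reflect my reading of the standard producer options rather than anything stated in the original post.

```java
import java.util.Properties;

// Hypothetical helper: assembles the producer settings used in ProducerDemo.
class ProducerSettings {

    static Properties build(String bootstrapServers) {
        Properties p = new Properties();
        p.put("bootstrap.servers", bootstrapServers);
        p.put("acks", "all");                      // wait until all in-sync replicas acknowledge
        p.put("retries", 0);                       // do not resend a failed record
        p.put("batch.size", 16 * 1024);            // 16384 bytes per partition batch
        p.put("linger.ms", 1);                     // wait up to 1 ms for a batch to fill
        p.put("buffer.memory", 32 * 1024 * 1024);  // 33554432 bytes of send buffer
        p.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        p.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        return p;
    }

    public static void main(String[] args) {
        // localhost:9092 is a placeholder address for this example.
        Properties p = build("localhost:9092");
        System.out.println(p.get("batch.size"));     // prints 16384
        System.out.println(p.get("buffer.memory"));  // prints 33554432
    }
}
```

Passing the result of build(...) to new KafkaProducer<String, String>(...) should behave the same as the inline configuration in ProducerDemo.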

⑧ Write a consumer:

package com.winter.kafka;

import java.util.Arrays;
import java.util.Properties;

import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;

public class ConsumerDemo {

    public static void main(String[] args) throws InterruptedException {
        Properties properties = new Properties();
        // Must point at the same broker the producer writes to
        properties.put("bootstrap.servers", "47.91.214.23:9092");
        properties.put("group.id", "group-1");
        properties.put("enable.auto.commit", "true");
        properties.put("auto.commit.interval.ms", "1000");
        // Start from the earliest offset when the group has no committed offset
        properties.put("auto.offset.reset", "earliest");
        properties.put("session.timeout.ms", "30000");
        properties.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        properties.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

        KafkaConsumer<String, String> kafkaConsumer = new KafkaConsumer<>(properties);
        kafkaConsumer.subscribe(Arrays.asList("HelloWorld"));

        // Poll forever; each call waits up to 100 ms for new records
        while (true) {
            ConsumerRecords<String, String> records = kafkaConsumer.poll(100);
            for (ConsumerRecord<String, String> record : records) {
                System.out.printf("offset = %d, value = %s", record.offset(), record.value());
                System.out.println("=====================>");
            }
        }
    }
}
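Because the printf call in the consumer loop has no trailing newline, the separator from println lands on the same line as the record. A small standalone sketch of what one console line should look like, using only the JDK; the class name ConsumerOutputFormat and the sample record at offset 0 are assumptions for illustration, not output captured from a real run.

```java
// Hypothetical helper: reproduces the text the consumer loop prints per record.
class ConsumerOutputFormat {

    // printf output (no newline) followed by the println separator.
    static String format(long offset, String value) {
        return String.format("offset = %d, value = %s", offset, value)
                + "=====================>";
    }

    public static void main(String[] args) {
        System.out.println(format(0L, "This is Message 0"));
        // prints: offset = 0, value = This is Message 0=====================>
    }
}
```

Adding %n to the end of the format string in the consumer would put the separator on its own line instead.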

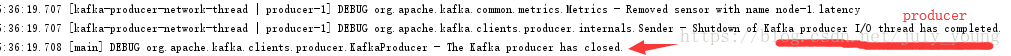

Start the consumer first, then start the producer, and watch the console.

————————————————

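Copyright notice: This is an original article by CSDN blogger 「july_young」, licensed under CC 4.0 BY-SA. Reposts must include a link to the original source and this notice.

Original link: https://blog.csdn.net/july_young/article/details/81704876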